Automation

Sponsored Content

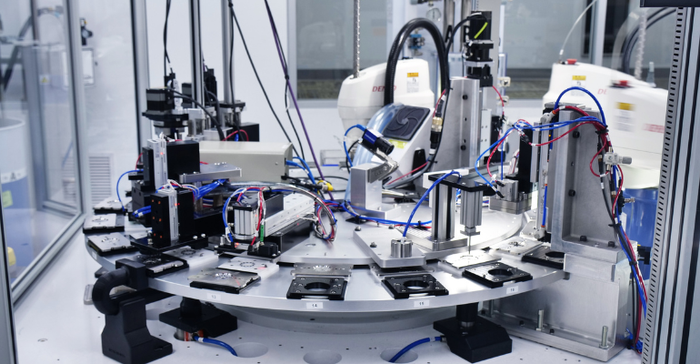

Transforming Healthcare and the Life Sciences Through Automation

Precision automation for research, processing & practice
