Transforming Modern Medical Devices with Machine Learning & AI Inference

Consider these 6 factors when selecting an AI accelerator for your medical device.

Artificial intelligence (AI) technology is finally delivering on its promise with commercial success in many industries, including automotive, manufacturing, retail, and logistics. That success has come through machine learning (ML) based approaches to tough problems such as computer vision, robotics, and natural language translation and understanding. AI adoption is also happening in the medical device industry, and it’s not just driving exciting new features and high-end functionality into the devices themselves. The technology also lets manufacturers move to new service models in which, instead of just selling and supporting devices, they capture recurring service revenue while strengthening customer relationships.

Overview of Machine Learning, Models, and Artificial Intelligence

ML is a subset of the larger field of AI in which models are trained via exposure to massive amounts of data. During training, the model makes a prediction from each example. If the prediction is correct, the model is reinforced; if it is incorrect, the model is modified slightly in an attempt to improve its accuracy. These small adjustments can occur millions, billions, or even trillions of times, enabling the models to become quite accurate in their predictions. Training can be lengthy, with some models being trained for months on thousands of machines, but the final result is typically a program that can run on a much smaller (although still computationally powerful) device for what is called inferencing. Inferencing is the term for using an ML-trained model to produce a predicted result.
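The train-then-infer cycle described above can be sketched with a toy example. This is a minimal illustration using a classic perceptron, chosen only because its update rule mirrors the description (leave the model alone when it is right, nudge it when it is wrong). The data, function names, and learning rate are all made up for illustration and bear no relation to a real medical model.

```python
# Toy illustration only: a perceptron trained on a tiny, made-up dataset.
# All names and values here are hypothetical.

def predict(weights, bias, features):
    """Inference: apply the trained parameters to produce a 0/1 prediction."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


def train(samples, epochs=20, learning_rate=0.5):
    """Training: adjust the parameters only when a prediction is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in samples:
            error = label - predict(weights, bias, features)  # 0 when correct
            if error != 0:
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, features)]
                bias += learning_rate * error
    return weights, bias


# Made-up training data: the label is 1 only when both features are "high".
training_data = [
    ((0.0, 0.0), 0),
    ((0.0, 1.0), 0),
    ((1.0, 0.0), 0),
    ((1.0, 1.0), 1),
]

weights, bias = train(training_data)

# Inference with the trained model on the same four inputs.
predictions = [predict(weights, bias, features) for features, _ in training_data]
print(predictions)  # prints [0, 0, 0, 1]
```

Production models have millions or billions of parameters rather than three, but the split is the same: `train` is the expensive, repeated adjustment step, and `predict` is the comparatively cheap inference step that runs on the device.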

As an example, ML and inference have been demonstrated to detect cancer by processing digital X-rays. The approach is to develop an ML model that converts X-ray images into segmented images in which instances of cancer are highlighted. In the training phase, images in which expert radiologists have identified cancers are used to teach the network what is not cancer, what is cancer, and what different types of cancer look like. The more an ML model is trained, the better it gets at maximizing correct diagnoses and minimizing incorrect ones. This means ML depends on smart model design and, just as importantly, on a massive quantity (hundreds of thousands to millions) of well-curated data examples in which the cancer has already been identified. By learning from the data, a model develops its own algorithm for identifying suspicious-looking regions and flagging them. AI inference is then used to suggest a result or diagnosis. The outcome, in this example, is a tool that helps medical professionals make better-informed treatment decisions.
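One common way to put numbers on "correct" and "incorrect" diagnoses is to compare model output against radiologist-confirmed labels and compute sensitivity (the share of real cancers that were flagged) and specificity (the share of healthy cases that were correctly cleared). The sketch below assumes binary labels and uses invented data purely to show the arithmetic.

```python
# Illustrative only: the labels below are invented, not clinical data.

def confusion_counts(truth, predicted):
    """Return (true_pos, false_neg, true_neg, false_pos) for 0/1 labels."""
    pairs = list(zip(truth, predicted))
    true_pos = sum(1 for t, p in pairs if t == 1 and p == 1)
    false_neg = sum(1 for t, p in pairs if t == 1 and p == 0)
    true_neg = sum(1 for t, p in pairs if t == 0 and p == 0)
    false_pos = sum(1 for t, p in pairs if t == 0 and p == 1)
    return true_pos, false_neg, true_neg, false_pos


# 1 = cancer present (per expert label), 0 = no cancer.
expert_labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
model_output = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]

tp, fn, tn, fp = confusion_counts(expert_labels, model_output)
sensitivity = tp / (tp + fn)  # fraction of real cancers that were flagged
specificity = tn / (tn + fp)  # fraction of healthy cases correctly cleared

print(f"sensitivity={sensitivity:.2f} specificity={specificity:.2f}")
# prints sensitivity=0.75 specificity=0.83
```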

The use of ML and AI inference in medical applications has the potential to transform modern healthcare and save many lives. For this reason, medical device manufacturers are rapidly exploring ways to incorporate these capabilities into their devices.

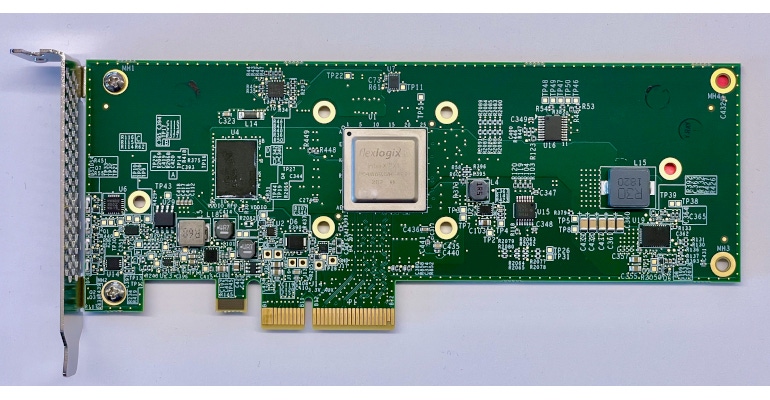

In the past, ML and AI inference capabilities were only available from expensive high-end supercomputers and large computational systems designed specifically to perform inferencing. Fortunately, new semiconductor devices developed specifically to accelerate AI workloads have brought many solutions down to price points and form factors that are viable for mainstream markets, so AI can now be incorporated into a wide range of low-cost systems.

Not All Inference Acceleration Is Created Equal

At the heart of every ML system is the AI accelerator that provides the power to process the massive amounts of data used for training, analysis, and action. However, selecting the right inference accelerator is not always an easy decision for a medical device manufacturer. Chip companies from all over the globe are vying for a piece of this high-growth market, and it’s hard to tell one solution from another, particularly since the industry lacks any real performance benchmarks for useful comparisons. Manufacturers may think they are installing an accelerator that performs like a Lamborghini, only to find out later that it fails to deliver on that performance target, perhaps taking ten times longer to complete its work. That is a serious problem when a physician is relying on the device to deliver fast, reliable, and accurate detection of critical conditions such as cancer.
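In the absence of trusted benchmarks, one practical option is to time each candidate on your own model and your own images. The harness below is a minimal sketch of that idea. `fake_inference` is a hypothetical stand-in for whatever call a vendor's SDK actually exposes, and the warm-up and repeat counts are arbitrary assumptions.

```python
import time


def measure_latency(run_inference, sample, warmup=3, repeats=20):
    """Time repeated calls to run_inference(sample); return summary stats."""
    for _ in range(warmup):
        run_inference(sample)  # warm-up calls are not timed

    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        run_inference(sample)
        timings.append(time.perf_counter() - start)

    timings.sort()
    return {
        "median_s": timings[len(timings) // 2],
        "worst_s": timings[-1],
    }


def fake_inference(image):
    """Hypothetical placeholder workload; replace with the real inference call."""
    return sum(image) / len(image)


stats = measure_latency(fake_inference, list(range(100_000)))
print(f"median {stats['median_s'] * 1000:.3f} ms, worst {stats['worst_s'] * 1000:.3f} ms")
```

Reporting the worst case alongside the median matters here, because a device that is usually fast but occasionally stalls can still hold up a clinical workflow.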

While there are many factors to consider when choosing an AI accelerator, the six below deserve particular attention.

Performance and throughput. One of the key jobs of an AI accelerator is to process data quickly and efficiently. In the medical field, this most often involves extremely high-resolution images. Take, for example, an X-ray or CT scan. In digital form, that image is typically in the 4K range (around 12 million pixels) so that it can be viewed in full clarity. A lower-resolution image may not show a broken bone or a suspicious mass. As a rule of thumb, make sure your AI accelerator can comfortably process images at this resolution.
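To get a feel for what that resolution means for an accelerator, here is a back-of-the-envelope calculation. The 4000 x 3000 dimensions are simply one assumed size that gives 12 million pixels, and the 16-bit depth and 10 images per second are illustrative assumptions, not requirements taken from any particular device.

```python
# Back-of-the-envelope sizing; every input value is an assumption.

def pixels_per_image(width, height):
    return width * height


def raw_bytes_per_image(width, height, bits_per_pixel):
    return width * height * bits_per_pixel // 8


def required_pixel_rate(width, height, images_per_second):
    return pixels_per_image(width, height) * images_per_second


WIDTH, HEIGHT = 4000, 3000  # assumed dimensions giving 12 million pixels
BITS_PER_PIXEL = 16         # assumed grayscale depth
IMAGES_PER_SECOND = 10      # assumed target throughput

pixels = pixels_per_image(WIDTH, HEIGHT)
raw_bytes = raw_bytes_per_image(WIDTH, HEIGHT, BITS_PER_PIXEL)
pixel_rate = required_pixel_rate(WIDTH, HEIGHT, IMAGES_PER_SECOND)

print(f"pixels per image: {pixels:,}")        # 12,000,000
print(f"raw bytes per image: {raw_bytes:,}")  # 24,000,000
print(f"pixels per second: {pixel_rate:,}")   # 120,000,000
```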

De-noising. AI algorithms can be trained to perform many common image-processing functions, such as de-noising. De-noising involves sharpening an image or removing scratches and blemishes, and it is an important step. In fact, a medical device customer we are currently working with claims that cleaning up its images can speed up image processing by 5x, giving radiologists images to view significantly faster than was previously possible. De-noise processing is not computationally difficult in itself, but doing it quickly on very high-resolution images can be a barrier, and many AI accelerators simply don’t have the processing power to perform this function.
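To illustrate what de-noising does at the pixel level, the sketch below uses a classical 3x3 median filter. This is a deliberately simple stand-in, not the trained ML de-noiser discussed above, and the tiny 5x5 "image" is invented. It also hints at why resolution matters: the work grows with every pixel in the image.

```python
# Classical median filter as a stand-in for a learned de-noiser (illustrative only).

def median_filter_3x3(image):
    """Replace each pixel with the median of its neighborhood (up to 3x3)."""
    height, width = len(image), len(image[0])
    output = [row[:] for row in image]
    for y in range(height):
        for x in range(width):
            neighbors = sorted(
                image[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            )
            # Middle element (upper median when the neighborhood is clipped at an edge).
            output[y][x] = neighbors[len(neighbors) // 2]
    return output


# Made-up 5x5 grayscale image: uniform background with one bright "blemish".
noisy = [[10] * 5 for _ in range(5)]
noisy[2][2] = 255

cleaned = median_filter_3x3(noisy)
print(cleaned[2][2])  # prints 10: the isolated blemish has been removed
```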

Flexibility to update models. Most AI accelerators are designed to solve a certain set of vision problems based on approaches that have been commonly used over the last decade. However, this industry is still rapidly evolving, and new approaches that increase accuracy or throughput are being proposed almost every day. While pure software solutions usually offer the flexibility to update approaches over time, solutions built on application-specific integrated circuit (ASIC) technology can be very limited in terms of the models they can run. For example, a model may be used to scan for abnormalities in a certain area of the body, and the device engineers may later want to reuse the design for another application that requires a differently optimized model. In these cases, reusing the existing hardware with a new model is significantly faster and less costly than redesigning the complete system around a different AI accelerator. That is why it’s imperative to look for an AI accelerator solution that offers the flexibility to support evolving AI models in the future.

Go with a specialist. Just as you would go to a heart doctor for a heart problem or a neurosurgeon for a brain issue, you should go to a provider that specializes in AI inference for vision. Make sure the company supplying your vision inference solution is focused on that area of expertise.

Price/performance. Medical device manufacturers need optimized solutions that allow them to scale their product. Current solutions, which are often based on GPU technology, are suitable for high-end systems but are simply too expensive for the mainstream market. Fortunately, new solutions designed specifically for accelerating AI inference workloads are now available, and they offer significantly better price/performance than GPU approaches, which were originally designed for computer gaming applications.
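A simple way to compare candidates on price/performance is to divide measured throughput by unit price. The sketch below shows the calculation. The two candidates and all of their figures are hypothetical placeholders, not measurements of real products, and in practice the throughput figure should come from timing your own workload.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    images_per_second: float  # measured on your own workload
    unit_price_usd: float

    def images_per_second_per_dollar(self):
        return self.images_per_second / self.unit_price_usd


# Hypothetical placeholder figures, for illustration only.
candidates = [
    Candidate("option_a", images_per_second=40.0, unit_price_usd=2000.0),
    Candidate("option_b", images_per_second=25.0, unit_price_usd=500.0),
]

best = max(candidates, key=Candidate.images_per_second_per_dollar)
for c in candidates:
    print(f"{c.name}: {c.images_per_second_per_dollar():.3f} images/s per dollar")
print(f"best price/performance: {best.name}")  # option_b in this made-up example
```

Note that the option with the highest raw throughput is not the winner here, which is the point of the metric. A device with a hard latency requirement would still need to check that the cheaper option meets it.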

Processing at the edge. In the modern data processing industry, the distinction between cloud and edge has become very important. Cloud refers to very large-scale data centers operated by companies such as Amazon and Microsoft. These cloud environments host data and workloads for many companies in very large shared facilities. While they offer great convenience for many users, there are also legitimate concerns about data privacy, especially where personal medical information is involved. Processing personal medical data at the edge, on or near the device itself, can reduce the volume of sensitive data that has to be sent to and stored in cloud data centers, which limits its exposure. That is why it’s important not to ignore processing at the edge, and AI processing at the edge in particular.

My Medical Device is Better…and I Get More Revenue?

As mentioned above, bringing high-performance AI inference capabilities to mainstream, cost-effective products is not the only advantage of AI inference accelerator technology. It also enables a manufacturer to adopt an as-a-service business model with recurring revenue from subscription services. Because the models are always evolving and improving, there will be opportunities to provide improved solutions over time. In the tech industry, this has taken the form of software-as-a-service offerings. This not only provides a recurring revenue stream for solution providers, but also gives them another opportunity to engage continually with customers and strengthen that relationship.

There are so many opportunities for AI and in particular AI inference technology in medical devices. It is an exciting time as we are seeing the fruits of decades of research in AI moving to mainstream markets and bringing real value to humanity.
