Software

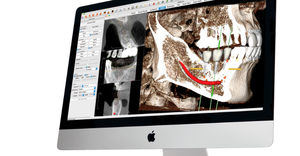

Medical device software development

Pro Tips for Medical Device Software Developers

Hear expert advice on how to innovate while staying ahead of cybersecurity threats in a free webinar hosted by MD+DI and Qmed+.

