Materials

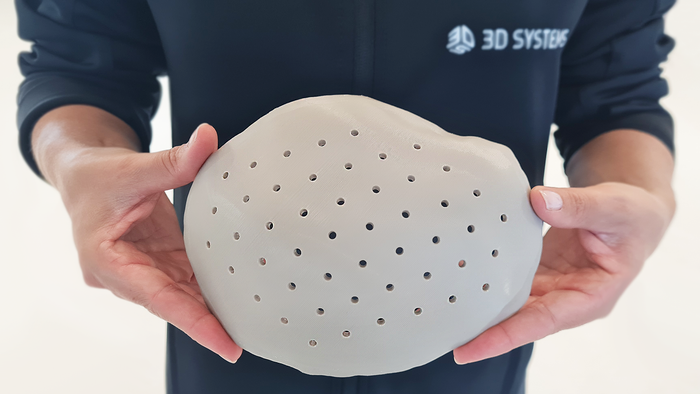

ReadyGo Sampler device

IVD

Porex Partners With UK-based Diagnostics Startup

The partnership will scale up production of ReadyGo Diagnostics’ signature device and give it global reach.

